This week, the AI industry lit itself on fire.

Here’s what it means for builders.

The Pentagon blacklisted an American AI company for the first time in history, and then reportedly used that same company’s AI in strikes on Iran the same day. A real estate VC called the home transaction “broken.” The Church is welcoming AI and ignoring it simultaneously. And chalk on a sidewalk became the most viral moment in tech. Here’s what’s actually happening, and what it means if you’re building.

The Pentagon Play: What Happened When One AI Company Said No

The federal government did something this week it has never done to an American company: it declared an AI lab a “supply chain risk.” That label has historically been reserved for Chinese telecom firms and foreign adversaries. This week, it landed on Anthropic — a company built in San Francisco, staffed by Americans, and funded by American capital.

Why? Because Anthropic refused to remove safety guardrails around mass surveillance and lethal autonomous weapons. The Pentagon wanted those tools unlocked. Anthropic said no. Secretary of Defense Pete Hegseth responded that technology should be available for “any lawful purpose.” Anthropic’s CEO Dario Amodei said they’d see the government in court.

Within hours, OpenAI stepped in and took the contract. Sam Altman later acknowledged in a public X thread: “the optics don’t look good.” That might be the understatement of 2026. A leaked Slack memo from Amodei described OpenAI’s framing of the situation as “mendacious,” “safety theater,” and “straight-up lies.” The two CEOs were reportedly photographed refusing to shake hands at an AI summit the same week.

Anthropic walked away from $200M on principle. They ended the week #1 in the App Store. That’s not a coincidence — that’s a brand telling the truth about what it is.

Here’s my take, and I’ll be straight with you: I land closer to “Anthropic did the right thing,” but with eyes open. I get both sides. OpenAI took a pragmatic position. Governments move slowly, and sometimes safety guardrails do need to evolve for legitimate national security use. I understand the argument.

But I have a harder time ignoring the pattern. The federal government has a history of doing exactly this to disruptive technology. Not just under one administration — across all of them. They shelved technology when it threatened established power structures. They restricted it when they couldn’t control it. They called it a “risk” when they meant a threat to their own leverage. This isn’t political. It’s a behavior that predates partisanship and outlasts every election cycle.

What you refuse to compromise on defines your ceiling more than what you’re willing to do. Anthropic drew a line. The market (real users, real people) responded by downloading their product in record numbers and leaving chalk art at their front door.

The most important business lesson this week wasn’t in the contract. It was in the App Store rankings. Conviction has a conversion rate. Principles aren’t a liability — they’re a positioning strategy that no competitor can copy, because they’d have to actually believe it.

One more thing that nobody is talking about: the Pentagon reportedly used Claude in the Iran strikes — hours after announcing the ban. If that’s accurate, it’s not just ironic. It’s a governance failure that should concern every person building AI tools for institutional use. The gap between policy and practice in government AI adoption is not a footnote. It’s the whole story.

The Broken Transaction: Why the Biggest VC in Proptech Just Put Their Chips on AI-Native

At Inman Connect New York this week, Fifth Wall CEO Brendan Wallace stood in front of the largest gathering of real estate professionals in the country and said something that should have landed harder than it did: the residential real estate transaction is “archaic, slow, and painful.”

Fifth Wall is the largest proptech-focused venture capital firm in the world. When they speak, the industry listens — or it should. Wallace wasn’t complaining about mortgage rates or inventory. He was diagnosing a structural problem. The way Americans buy and sell homes is broken at the process level, and AI is the fix — but only if it’s built from the ground up for that purpose.

The distinction he drew matters. Fifth Wall is no longer backing companies that bolt AI onto existing workflows. They’re betting on AI-native platforms: products where artificial intelligence isn’t a feature but the foundation. Two examples are Breezy, an AI-native agent workflow engine, and Ridley, a fixed-price automated home-selling platform. Their filter for every investment now: “Does this technology reduce the cost of housing over time?”

Here’s why this matters to me personally: what Fifth Wall is describing — the AI-native intelligence layer that serves the buyer, not the transaction — is exactly what I’ve been building with HomeDataReports.

The home inspection industry tells you what’s inside the building. Title search tells you who owns it. Nobody tells you what’s outside — the flood zone, the wildfire probability, the environmental contamination, the neighborhood safety data, the risk scores that your lender already pulled and your insurer already priced before you signed anything. That information asymmetry is the broken transaction. It’s not about the process being slow. It’s about the fact that every other party at the closing table already has intelligence that the buyer doesn’t.

AI-native doesn’t mean “AI features.” It means the product couldn’t exist without AI. That’s a completely different company.

Wallace’s framing is worth sitting with if you’re a builder: are you building a product that uses AI, or a product that is AI? The former gets replaced in the next product cycle. The latter creates a category. The largest proptech VC just declared that the residential transaction is the biggest unsolved problem in the U.S. economy — and that the window to build first-mover AI-native solutions is open right now. That window doesn’t stay open.

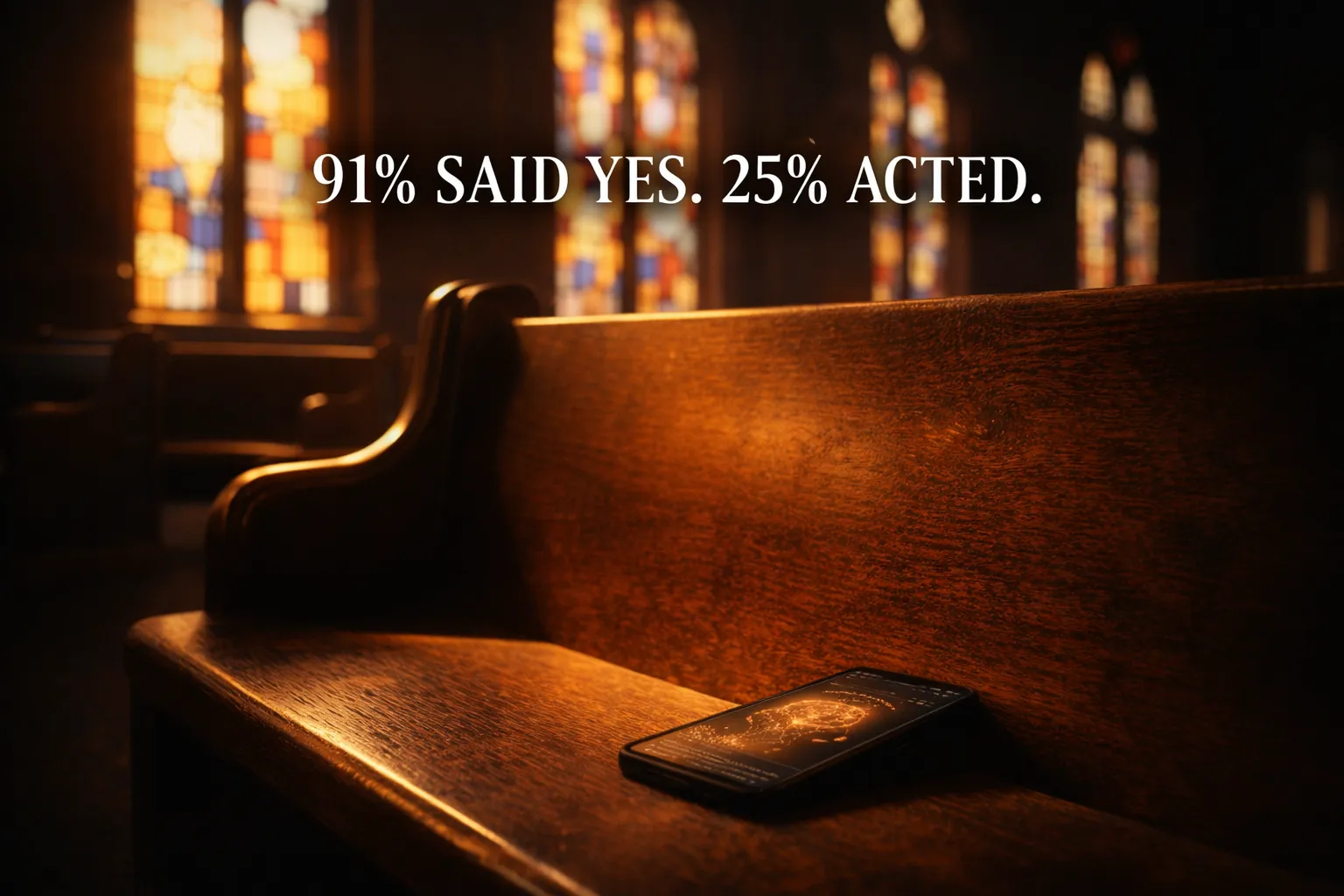

The 66-Point Gap: Why 91% of Church Leaders Said Yes to AI — and Only 25% Actually Meant It

The Hartford International Institute released survey data this week that I haven’t been able to stop thinking about. 91% of church leaders say they welcome AI in ministry. Fewer than 25% are using it for theological content — sermons, devotionals, discipleship curriculum.

That’s a gap of at least 66 points between what people say they believe and what they actually do. In any other context, we’d call that a credibility problem. In the church, we call it familiarity. Because this isn’t the first time.

The printing press. The telegraph — whose first message was a Bible verse, sent by Samuel Morse, a man who gave God the credit openly. Radio, which Christians were among the first to broadcast on. Television, which Billy Graham used to reach 3.2 million souls over six decades. The internet, which gave us YouVersion — a billion installs, launched on day one of the App Store. Every single time, the same pattern: resistance, debate, adoption by the few who moved first, and then the majority following years later and calling it wisdom.

I want to be careful here, because I think the nuance matters. The hesitation isn’t entirely wrong. There’s a real question about what is irreplaceable in pastoral care — and the answer is presence. AI can draft a sermon. It cannot sit with someone at a graveside. It can help a small group leader prepare. It cannot mourn. It can provide information. It cannot intercede. That distinction is the moat for every faith-tech product built with integrity.

The same faith leaders praying for God to expand their reach are refusing the tool He handed them. Stewardship applies to technology too.

Kyoto University introduced a robot Buddhist monk this week, trained on scriptures, capable of performing religious counsel. Charisma Magazine’s response was a Christian warning: when spiritual authority is outsourced to machines, faith risks becoming transactional rather than relational. They’re right about the risk. They’re wrong if the solution is to ignore the tool entirely.

The survey also found something the headlines missed: ministries using digital tools reported higher spiritual vitality. Not lower. Not compromised. Higher. The correlation between faithful stewardship of technology and the health of a congregation is positive, not negative. That finding alone should reframe every board meeting where someone argues that staying offline is the more “holy” path.

The 66-point gap is not just a survey finding. It’s a call to action. The leaders who figure out how to use AI wisely, not to replace relationship but to extend it, will reach more people, disciple more people, and serve more people than the ones who waited until it was obvious. It was always obvious. We just had to decide whether we believed it.

#QuitGPT: When Chalk on a Sidewalk Becomes the Most Viral Moment in AI

Someone went to Anthropic’s office in San Francisco this week and wrote in chalk on the sidewalk: “You give us courage.”

That image went viral. Not a product launch. Not a benchmark announcement. Not a funding round. Chalk on concrete — in response to an AI company refusing a government weapons contract — became the most emotionally resonant moment in AI this week. Let that land.

The data underneath the moment is significant. A Reddit post titled “Cancel and Delete ChatGPT!!!” hit 30,000 upvotes. An Instagram account called “quitGPT” gained 10,000 followers within days of OpenAI’s Pentagon deal. On OpenRouter, a platform that routes AI queries across dozens of models, 12 different models are now outpacing OpenAI in usage. The top model? China’s MiniMax. OpenAI’s enterprise wallet share is projected to slide from 62% to 53% by the end of 2026.

I want to be analytically honest here, not just fired up: user sentiment and actual user behavior are two different things. Thirty thousand Reddit upvotes don’t mean thirty thousand cancellations. People who tweet “I’m leaving Twitter” are usually still on Twitter. The #QuitGPT movement is meaningful as a signal, not necessarily as a migration. Most professional users who built workflows on ChatGPT are not going to rebuild them this week because of a Pentagon contract.

But here’s what is real: the monoculture is over. For the last two years, “AI” meant OpenAI in the mainstream. That’s breaking apart faster than anyone projected. Claude is now #1 in the App Store. Google employees are signing letters in support of Anthropic — their direct competitor. The AI market is fracturing into values camps, not just capability tiers.

Values-driven brands don’t need ad budgets. They need moments of genuine conviction — and the nerve to actually hold the line when it costs something.

This has direct implications for anyone building. The question isn’t just “which AI model do I use?” anymore. It’s “which AI company’s values do I want embedded in my product?” That’s a new question. A year ago, most builders didn’t ask it. Now it shows up in investor decks, onboarding flows, and apparently in chalk on a San Francisco sidewalk at 2 AM.

The AI Cold War also picked up a genuinely strange new front line this week. Anthropic publicly accused DeepSeek, Moonshot AI, and MiniMax of running 24,000 fake accounts to extract Claude’s capabilities through distillation: more than 16 million conversations used, according to Anthropic, to essentially steal the AI’s reasoning patterns without paying for training. OpenAI made similar allegations to Congress. The AI equivalent of industrial espionage is now a congressional hearing topic. We’re in territory that has no precedent, and the rulebook is being written in real time.

What I keep coming back to is this: the companies that win the next five years won’t necessarily be the ones with the most parameters or the biggest context window. They’ll be the ones that a meaningful segment of users genuinely trust. Trust is built slowly. It’s destroyed fast. And this week showed that users are paying attention to how these companies behave when it costs them something — not just when it’s convenient to be principled.

Anthropic was principled when it cost them $200M. That’s the kind of behavior that builds trust, the kind people write chalk messages about.

Want to work with someone who builds while the dust is still settling?

I work with founders and operators navigating real decisions in AI — strategy, architecture, and execution. No theory. No slide decks. Real builds, real clarity.